Neural networks were first proposed in 1943, but they’ve only become the backbone of modern artificial intelligence in recent years. Loosely modeled on the brain’s structure, these systems process information and solve complex problems.

Neural networks have dramatically changed how machines handle tasks that once required human expertise. They can now complete speech and image recognition tasks in minutes that would take human experts hours. The field has evolved from Frank Rosenblatt’s simple Perceptron in 1957 into modern deep learning systems with dozens or even hundreds of layers.

This piece explains how neural networks operate and explores different types, from feedforward to convolutional networks, along with their role in AI applications like ChatGPT and DALL-E. Whether you’re curious about AI technology or want to understand where computing is heading, this plain-English explanation covers the fundamentals without letting technical jargon get in your way.

The AI Revolution in Your Daily Life

AI technology has quietly woven itself into your daily routine. Most people interact with AI systems many times a day, often without noticing, as these systems learn and adapt to how they behave.

From Siri to Netflix: AI You Already Use

Your iPhone’s Face ID projects more than 30,000 invisible infrared dots onto your face to build a detailed 3D map. Neural networks then compare this map with your stored facial data to verify your identity before unlocking the phone.

Siri and Alexa have become everyday digital companions. Rather than simply repeating your words, they interpret context through natural language processing. These AI systems learn from your past interactions and remember your preferences. They convert your speech into text, work out what you want, and combine data such as your location, the time, and your history to give relevant answers.

Netflix uses AI to analyze over 200 billion events daily, studying what you watch to predict which shows you might like next. Research shows that AI-powered recommendations drive 80% of what people watch on Netflix. The system considers your viewing history and when you watch, and it even analyzes the scripts and plots of hit shows to find what appeals to audiences.

Uber uses AI to forecast demand and direct drivers to where they’re needed, based on historical data and real-time conditions. Navigation apps use machine learning to identify building outlines, read street numbers from imagery, and model traffic flow to find the fastest routes.

The Hidden Neural Networks Behind Everyday Tech

Neural networks power these everyday apps and sit at the heart of modern AI. They process data in a way loosely modeled on the brain, using connected units called artificial neurons. Each neuron performs simple math, but across thousands or millions of neurons these small calculations add up to complex decisions.

Convolutional neural networks in your phone’s camera spot faces for filters and photo sorting. These networks are great at finding patterns by processing visual data through multiple layers. Language networks help Grammarly catch grammar mistakes and suggest better wording through natural language processing.

Social media platforms use neural networks to customize your feed based on what you do. AI algorithms on Instagram and Facebook look at your likes, shares, and comments to decide what content you see first. These systems keep learning as your interests change.

Recommendation systems on streaming and shopping sites use neural networks to predict your tastes by tracking your online activity, then suggest items that match your interests. Neural networks can also make reasonable predictions from ambiguous or incomplete data.

People benefit from neural networks in countless ways – from convenient ridesharing and email sorting to complex tasks like medical diagnosis and self-driving cars. These networks get smarter and faster, changing how we use technology every day.

Neural Networks: The Engine Powering Modern AI

Image Source: The Scientist

Traditional computers follow explicit instructions and execute predetermined steps to solve problems. Neural networks work on a completely different principle that has changed the computing world.

What Makes Neural Networks Different from Other Computing

Neural networks represent a radical departure from conventional programming. Traditional computing systems rely on explicit programming: every scenario must be anticipated and coded in advance. These systems excel at tasks with clear rules but struggle with complex, unstructured data.

Neural networks learn from experience instead of following predefined instructions. They adjust their internal parameters based on the data they process and improve performance over time. This learning capability helps them handle tasks that machines couldn’t do before, like pattern recognition in images, speech, and text.

The way information flows also sets these systems apart. Traditional programs typically execute instructions one step after another, while neural networks compute across many interconnected nodes in parallel. This parallel processing loosely resembles how the brain works and helps neural networks to:

- Process big datasets and identify complex patterns

- Adapt to new information without complete redesign

- Handle unstructured data like images and natural language

- Make predictions based on learned patterns

Google Search illustrates this capability, using neural networks to help return relevant results in milliseconds.

Why They’re Called ‘Neural’ Networks

The name “neural” comes from these networks’ resemblance to the human brain’s neural structure. They are far simpler than biological systems, but they borrow the basic idea of how neurons communicate.

Brain neurons receive signals, process them, and fire responses to other neurons. Each artificial neuron in a network does something similar:

- Receives weighted inputs from connected nodes

- Processes this information through an activation function

- Transmits outputs to the next layer when values exceed certain thresholds
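
A single artificial neuron fits in a few lines of Python. This minimal sketch uses a hard threshold (“step”) activation, close to the original 1943 idea, and the weights and threshold are hand-picked, illustrative values; real networks learn their weights and usually use smooth activation functions instead.

```python
def neuron_fires(inputs, weights, threshold):
    """Step-activation neuron: output 1 ("fire") only if the weighted sum
    of the inputs exceeds the threshold, otherwise output 0."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum > threshold else 0


# Illustrative, hand-picked values that make the neuron act like a logical AND.
weights, threshold = [1.0, 1.0], 1.5
for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", neuron_fires([a, b], weights, threshold))
```

With both weights at 1.0 and a threshold of 1.5, the neuron only fires when both inputs are on, so it behaves like a logical AND gate.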

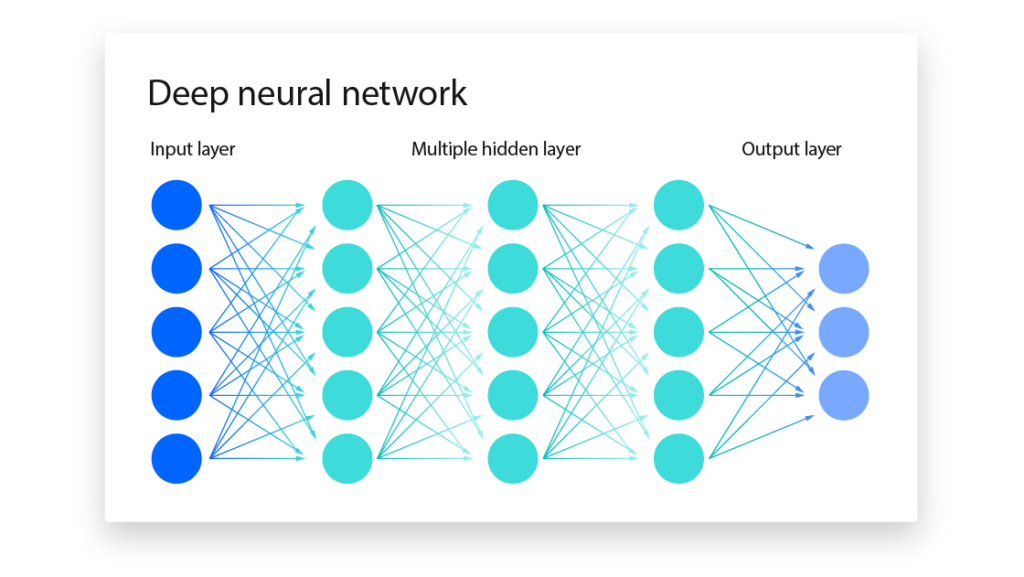

The basic structure has an input layer that receives data, one or more hidden layers that process information, and an output layer that produces results. Data enters the network, and each node applies a mathematical operation to decide whether to “fire” and pass information forward.

This design helps explain why neural networks show abilities that resemble aspects of human thinking: they learn, adapt, and make decisions with some of the brain’s flexibility. Their parallel, layered structure lets them find patterns in data that would be impractical to program directly.

How Neural Networks Learn from Data

Image Source: Dreamstime.com

Humans learn from years of life experience, while neural networks gain knowledge through training, a systematic computational process that turns raw data into useful information.

The Training Process: Teaching AI Through Examples

Neural networks learn through backpropagation, a training algorithm that makes gradient descent work for multi-layer networks. Training starts with random weights on the connections between neurons. As training data flows through, the network measures the difference between its predicted and actual outputs. Backpropagation then works backward through the layers to estimate how much each weight contributed to that error, and each weight is adjusted slightly in the direction that reduces it. The process resembles a climber descending a mountain by taking small steps down the steepest path to reach the lowest point.
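
To make that loop concrete, here is a minimal sketch in plain Python (no ML library assumed) of a tiny network with one hidden layer learning XOR, a classic problem no single neuron can solve. The dataset, the four hidden neurons, the learning rate, and the epoch count are illustrative assumptions rather than values from any real system, and the weights are updated after every example (stochastic gradient descent) on squared error.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Toy dataset (XOR): no single neuron can solve it, so a hidden layer is needed.
DATA = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]


def forward(x, w_hidden, b_hidden, w_out, b_out):
    """Return the hidden-layer activations and the network's output for x."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(w_hidden, b_hidden)]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + b_out)
    return hidden, output


def mean_squared_error(params):
    return sum((forward(x, *params)[1] - y) ** 2 for x, y in DATA) / len(DATA)


def train(n_hidden=4, lr=0.5, epochs=5000, seed=0):
    rng = random.Random(seed)
    # Start from small random weights.
    w_hidden = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(n_hidden)]
    b_hidden = [0.0] * n_hidden
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    b_out = 0.0
    loss_before = mean_squared_error((w_hidden, b_hidden, w_out, b_out))

    for _ in range(epochs):  # one epoch = one pass over every example
        for x, y in DATA:
            hidden, out = forward(x, w_hidden, b_hidden, w_out, b_out)
            # Output error times the sigmoid's slope (constant factor 2 folded into lr).
            delta_out = (out - y) * out * (1 - out)
            # Backpropagate: pass the error back to each hidden neuron via its weight.
            delta_hidden = [delta_out * w_out[j] * hidden[j] * (1 - hidden[j])
                            for j in range(n_hidden)]
            # Gradient descent: nudge every weight and bias against its gradient.
            for j in range(n_hidden):
                w_out[j] -= lr * delta_out * hidden[j]
                for i in range(len(x)):
                    w_hidden[j][i] -= lr * delta_hidden[j] * x[i]
                b_hidden[j] -= lr * delta_hidden[j]
            b_out -= lr * delta_out

    params = (w_hidden, b_hidden, w_out, b_out)
    return params, loss_before, mean_squared_error(params)


params, before, after = train()
print(f"loss before training: {before:.3f}, after: {after:.3f}")
for x, y in DATA:
    print(x, "target", y, "prediction", round(forward(x, *params)[1], 2))
```

Because the starting weights are random, the exact numbers depend on the seed, and a network this small can occasionally settle into a poor solution; typically the predictions move toward 0, 1, 1, 0, and more epochs or a different seed may help when they don’t.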

Each complete pass through all training data is called an epoch. The learning rate—a key hyperparameter—determines the size of each adjustment step. The network might miss optimal values if the rate is too high, while a rate that’s too low makes training drag on.
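
The effect of the learning rate shows up even on a one-variable toy problem. This sketch (an illustrative assumption, not a real network) runs plain gradient descent on the loss (w − 3)², whose lowest point is w = 3, with three different learning rates.

```python
def gradient_descent(lr, steps=20, start=0.0):
    """Minimise the toy loss f(w) = (w - 3)**2, whose lowest point is w = 3."""
    w = start
    for _ in range(steps):
        grad = 2 * (w - 3)  # slope of the loss at the current w
        w -= lr * grad      # the learning rate sets the size of each step
    return w


for lr in (0.01, 0.1, 1.1):
    print(f"learning rate {lr}: w = {gradient_descent(lr):.3f} (target 3)")
```

After 20 steps, the rate of 0.01 is still only about a third of the way to 3, the rate of 0.1 lands close to it, and the rate of 1.1 overshoots by more on every step and diverges.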

Why More Data Usually Means Better Results

Neural networks work better with lots of training data. They can “let the data speak for itself” instead of relying on assumptions or weak patterns. More data helps networks find real patterns rather than just memorizing noise.

Task complexity determines how much data you need. Simple tasks like handwritten digit classification might work with a few thousand examples. Complex tasks like natural language processing need millions of samples to be accurate enough.

Data quality is just as important as quantity. Noisy or poorly labeled datasets need more samples to make up for inaccuracies. Data augmentation can help by creating variations of existing samples, though these methods have their limits.
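
Data augmentation for images can be sketched with two simple transformations. This example assumes a tiny grayscale image stored as a list of pixel rows with values from 0 to 1; the horizontal flip and small random noise are common choices, but the specific noise level and number of copies here are illustrative.

```python
import random


def horizontal_flip(image):
    """Mirror a grayscale image (a list of pixel rows) left to right."""
    return [list(reversed(row)) for row in image]


def add_noise(image, scale, rng):
    """Jitter every pixel by up to +/- scale, clipped to the 0..1 range."""
    return [[min(1.0, max(0.0, p + rng.uniform(-scale, scale))) for p in row]
            for row in image]


def augment(image, n_copies=3, noise=0.05, seed=0):
    """Create n_copies randomly flipped, slightly noisy variants of one image."""
    rng = random.Random(seed)
    variants = []
    for _ in range(n_copies):
        img = horizontal_flip(image) if rng.random() < 0.5 else [row[:] for row in image]
        variants.append(add_noise(img, noise, rng))
    return variants


tiny_image = [[0.0, 0.2, 0.9],
              [0.1, 0.8, 1.0],
              [0.0, 0.3, 0.7]]
for variant in augment(tiny_image):
    print([[round(p, 2) for p in row] for row in variant])
```

Each variant acts as an extra training example, but it is still only a variation of something the network has already seen, which is one reason augmentation has limits. Transformations must also suit the task: a left-right flip that is harmless for a photo of a cat turns many handwritten letters and digits into invalid shapes.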

When Neural Networks Make Mistakes

Overfitting is a common way neural networks fail. The network learns training data too well but can’t handle new examples. It’s like memorizing test answers without understanding the concepts.
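
Overfitting is easiest to see with simple curve fitting, so this sketch uses a deliberately non-neural stand-in: a polynomial that passes exactly through six noisy training points (the “memorizer”) versus a straight line fitted by least squares. The data, the noise values, and the unseen test point x = 6 are made up for illustration.

```python
def lagrange_fit(xs, ys):
    """'Memorizer': the polynomial that passes exactly through every training point."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return predict


def line_fit(xs, ys):
    """Simpler model: the least-squares straight line through the training points."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return lambda x: intercept + slope * x


def max_training_error(model, xs, ys):
    return max(abs(model(x) - y) for x, y in zip(xs, ys))


# Made-up training data: the true rule is y = 2x, plus a little fixed noise.
xs = [0, 1, 2, 3, 4, 5]
noise = [0.3, -0.2, 0.25, -0.3, 0.2, -0.25]
ys = [2 * x + e for x, e in zip(xs, noise)]

memorizer, simple = lagrange_fit(xs, ys), line_fit(xs, ys)
for name, model in (("memorizer", memorizer), ("straight line", simple)):
    print(name, "| worst training error:", round(max_training_error(model, xs, ys), 3),
          "| error on unseen x=6:", round(abs(model(6) - 12), 3))
```

The memorizer scores perfectly on its training points but misses the unseen point by more than 15, while the line accepts small training errors and lands within about 0.2 of the true answer of 12.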

Other common errors include:

- Underfitting: Models that are too simple to catch underlying patterns

- Vanishing gradients: Gradients become too small during backpropagation, so lower layers can’t learn well

- Dead ReLU units: Neurons whose inputs stay below zero always output zero and receive no gradient, so they stop contributing and stop learning
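
Both gradient problems come down to the slopes of the activation functions. This sketch looks only at those slopes and ignores the weights, which also enter the product during backpropagation; the list of negative inputs is a hypothetical example of a neuron that has “died.”

```python
import math


def sigmoid_slope(z):
    """Derivative of the sigmoid activation; it never exceeds 0.25 (at z = 0)."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)


def relu_slope(z):
    """Derivative of ReLU: 1 for positive inputs, 0 for negative ones."""
    return 1.0 if z > 0 else 0.0


# Vanishing gradients: backpropagation multiplies roughly one activation slope
# per layer (ignoring weights), so even sigmoid's best case shrinks fast.
for depth in (1, 5, 10, 20):
    print(f"{depth:2d} sigmoid layers -> best-case slope product {sigmoid_slope(0.0) ** depth:.2e}")

# Dead ReLU: if a neuron's weighted input is negative for every example,
# its slope is always 0, so backpropagation never updates its weights.
pre_activations = [-2.1, -0.4, -3.3, -1.0]
print("ReLU gradients:", [relu_slope(z) for z in pre_activations])
```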

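Dropout regularization helps networks generalize by randomly “dropping” neurons during training, so the network can’t rely too heavily on any single unit. Batch normalization rescales each layer’s inputs to keep training stable. Good data preprocessing, such as handling missing values and outliers properly, also has a substantial effect on training success.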
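
Here is a simplified sketch of both techniques on plain lists of numbers. It uses “inverted” dropout, a common variant that rescales the surviving units during training, and a batch normalization that works on one feature across one mini-batch; the fixed gamma and beta stand in for values a real network would learn, and the running statistics real implementations keep for inference are omitted.

```python
import random


def dropout(activations, rate, rng, training=True):
    """Inverted dropout: during training, zero each unit with probability `rate`
    and scale the survivors by 1 / (1 - rate) so the expected total is unchanged.
    At inference time the activations pass through untouched."""
    if not training or rate == 0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


def batch_norm(values, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch to mean 0 and variance ~1,
    then apply a scale (gamma) and shift (beta), which a real network learns."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [gamma * (v - mean) / (var + eps) ** 0.5 + beta for v in values]


rng = random.Random(42)
print("after dropout:", dropout([0.5, 1.2, 0.3, 0.8], rate=0.5, rng=rng))
print("after batch norm:", [round(v, 3) for v in batch_norm([10.0, 12.0, 14.0, 16.0])])
```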

Popular Types of Neural Networks in Today’s Technology

Neural networks come in different varieties, each built for specific tasks in our modern technology ecosystem. These specialized networks power the intelligent features we use daily, from photo sorting to voice recognition.

Image Recognition Networks in Your Phone’s Camera

Convolutional Neural Networks (CNNs) act as the vision system behind your smartphone camera’s intelligence. These networks excel at image classification, object detection, and pattern recognition by sliding small learned filters (grids of weights) across an image to detect visual patterns such as edges and textures. They also power applications like facial recognition and optical character recognition. Each filter produces a feature map that records where its particular visual element appears in the image.
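
The core CNN operation can be sketched without any library. This example slides a 2×2 filter over a tiny made-up image (“valid” mode, stride 1) and returns the feature map; the vertical-edge filter is hand-written for illustration, whereas a real CNN learns its filter values during training. Like most deep learning libraries, it computes cross-correlation, meaning the kernel is not flipped.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and return the feature map of
    weighted sums (valid mode, stride 1, kernel not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


# A 4x5 image: dark (0) on the left, bright (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(4)]
# Hand-written vertical-edge filter; a CNN would learn its own values.
kernel = [[-1, 1],
          [-1, 1]]
feature_map = convolve2d(image, kernel)
print(feature_map)  # → [[0, 2, 0, 0], [0, 2, 0, 0], [0, 2, 0, 0]]
```

The feature map lights up only in the column where dark turns to bright, which is exactly the edge this filter responds to.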

Your phone’s camera uses CNNs to identify faces, sort photos by content, and apply filters based on scene recognition. This technology makes features like portrait mode possible, where the AI separates the person from the background and blurs the background. Modern smartphones also use AI to recognize many kinds of scenes and adjust exposure, focus, and color balance accordingly.

Language Networks in Virtual Assistants

Recurrent Neural Networks (RNNs) and Transformer models form the foundation of virtual assistants like Siri and Alexa. These networks process sequential data, which makes them well suited to understanding speech and language in context. They turn your speech into text, identify your intent, and generate relevant responses.
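
The “memory” that lets an RNN handle sequences can be sketched with a single number as its hidden state. This is a minimal illustration with hand-picked weights (w_h, w_x, and b are assumptions, not trained values); real RNNs use vectors and learned weight matrices, and Transformers replace this step-by-step recurrence with attention, which is not shown here.

```python
import math


def rnn_step(h_prev, x, w_h, w_x, b):
    """One step of a minimal recurrent cell: mix the previous hidden state
    (the network's 'memory') with the new input and squash with tanh."""
    return math.tanh(w_h * h_prev + w_x * x + b)


def run_rnn(sequence, w_h=0.5, w_x=1.0, b=0.0):
    """Feed a sequence one item at a time, carrying the hidden state forward."""
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(h, x, w_h, w_x, b)
        states.append(h)
    return states


# Same inputs, different order: the final state differs because the
# hidden state remembers what came earlier.
print(run_rnn([1.0, 0.0, 0.0])[-1])
print(run_rnn([0.0, 0.0, 1.0])[-1])
```

An input seen early fades from the hidden state step by step, while a recent input dominates it, which is why order matters to this kind of network.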

Virtual assistants make use of Natural Language Processing (NLP) to break down language barriers. Some systems can detect and translate messages in different languages. NLP-powered chatbots now resolve customer queries about 70% of the time without human help.

Recommendation Networks in Your Streaming Services

Deep learning recommendation systems study your viewing habits to suggest content you might enjoy. Netflix uses reinforcement learning to adjust its recommendations based on what you watch, skip, and rate. The system watches your interactions and rewards suggestions that lead to positive outcomes, like finishing a series.
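
The reward loop described above can be illustrated with a multi-armed bandit, the simplest reinforcement-learning setting. This epsilon-greedy sketch is a generic textbook technique, not Netflix’s actual system: the “titles,” their hypothetical chances of being finished, and the exploration rate are all made-up assumptions.

```python
import random


def recommend(estimates, epsilon, rng):
    """Epsilon-greedy: usually pick the title with the best estimate,
    but explore a random title with probability epsilon."""
    if rng.random() < epsilon:
        return rng.randrange(len(estimates))
    return max(range(len(estimates)), key=lambda i: estimates[i])


def simulate(finish_prob, rounds=2000, epsilon=0.1, seed=0):
    """finish_prob[i] is a hypothetical chance a viewer finishes title i."""
    rng = random.Random(seed)
    estimates = [0.0] * len(finish_prob)  # learned value of each title
    counts = [0] * len(finish_prob)       # how often each title was shown
    for _ in range(rounds):
        i = recommend(estimates, epsilon, rng)
        reward = 1.0 if rng.random() < finish_prob[i] else 0.0  # finished it?
        counts[i] += 1
        estimates[i] += (reward - estimates[i]) / counts[i]  # running average
    return estimates, counts


estimates, counts = simulate([0.2, 0.5, 0.8])
print("times recommended:", counts)
print("estimated finish rates:", [round(e, 2) for e in estimates])
```

Over many rounds the recommender learns, purely from rewards, to favor the title viewers tend to finish, while the occasional random pick keeps it from locking onto an early lucky guess.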

YouTube’s recommendation networks analyze your search history and how much time you spend on content. These complex systems have a simple goal: keeping you watching by showing content that matches your preferences.

Building Your First Mental Model of a Neural Network

Image Source: IBM

You can understand how neural networks work without complex math by picturing a busy restaurant. This everyday comparison helps explain what a neural network in AI actually is.

The Restaurant Analogy: Orders, Kitchens, and Meals

Picture yourself walking into a restaurant with several cooking stations. The ingredient station at the entrance (input layer) holds raw materials – vegetables, spices, and proteins. These ingredients work just like data points that feed into a neural network’s input layer.

The cooks at different stations (hidden layers) turn these ingredients into partial dishes. Each cook uses specific recipes with exact measurements, similar to how neurons apply weights to their inputs. Some ingredients play a bigger role than others, just like neural networks give different importance to various inputs.

Quality testers (activation functions) review each preparation before it moves to the next station. A dish moves forward only if it meets certain standards. This works the same way activation functions decide if neurons should “fire” and send information ahead.

The chef brings all components together into finished meals (output layer) ready to serve. Customer feedback helps improve future cooking – exactly how backpropagation fine-tunes a neural network’s performance.

The Network in Action: Following Information Through Layers

Data first hits the input layer where each node stands for a single data point. This information flows through weighted connections to hidden layers that process everything.

Each neuron in these hidden layers follows these steps:

- Inputs multiply by their weights

- Results add up with a bias value

- The sum goes through an activation function

- The output becomes input for the next layer

This feedforward propagation continues until it reaches the output layer. The network then produces its final result – whether it classifies an image, predicts a value, or generates text.
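
Those four steps translate almost line for line into code. This sketch runs one forward pass through a tiny network with 3 inputs, 2 hidden neurons (ReLU activation), and 1 output neuron (sigmoid activation); every weight and bias is a hypothetical, hand-set value chosen for illustration, not a trained one.

```python
import math


def dense_layer(inputs, weights, biases, activation):
    """For each neuron: multiply inputs by weights, add the bias,
    apply the activation; the results feed the next layer."""
    return [activation(sum(w * x for w, x in zip(neuron_w, inputs)) + b)
            for neuron_w, b in zip(weights, biases)]


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical hand-set parameters: 3 inputs -> 2 hidden neurons -> 1 output.
hidden_w = [[0.2, -0.5, 1.0], [0.7, 0.1, -0.3]]
hidden_b = [0.1, 0.0]
output_w = [[1.5, -2.0]]
output_b = [0.2]

x = [1.0, 0.5, 2.0]                              # input layer: one value per node
h = dense_layer(x, hidden_w, hidden_b, relu)     # hidden layer
y = dense_layer(h, output_w, output_b, sigmoid)  # output layer
print("hidden:", h, "output:", y)
```

Here the hidden neurons produce 2.05 and 0.15, and the output neuron squashes its weighted sum of 2.975 into a value of about 0.95, which a classifier could read as a 95% score for the positive class.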

Neural networks get their strength from many neurons working together across layers. This teamwork lets them spot complex patterns that would be extremely hard to capture with hand-written rules.

The Future and Limitations of Neural Network AI

Neural networks have remarkable capabilities, but they also face important limitations that affect their current applications and future development.

Current Boundaries of What Neural Networks Can Do

Neural networks have a fundamental problem with generalization: they excel at specific tasks but struggle when they encounter scenarios outside their training data. This is especially risky in critical situations where mistakes can lead to serious consequences. These systems also need massive amounts of labeled data to learn properly, which makes them impractical when information is limited.

Another crucial limitation is that these networks don’t detect causation. They can identify correlations in big datasets, but they can’t understand qualitative relationships between variables, such as causality and hierarchy. They also struggle in dynamic environments, where changing conditions often require complete retraining.

The “black box” nature of neural networks creates one of their toughest challenges. Users and developers often can’t explain how or why they generated a particular output. This lack of transparency makes neural networks unsuitable for applications that need interpretability, like credit approval processes where customers deserve explanations for decisions.

Ethical Considerations and Challenges

Ethical concerns in neural network development span several areas, with bias and fairness at the top of the list. These systems can inherit and amplify biases from their training data, leading to discriminatory outcomes. Privacy is another concern, since AI systems process huge amounts of personal information.

Accountability creates another challenge—we need clear frameworks to establish who’s responsible when AI systems cause harm. These concerns have prompted more than 60 countries to develop national AI strategies that balance innovation with proper safeguards.

What’s Coming Next in Neural Network Development

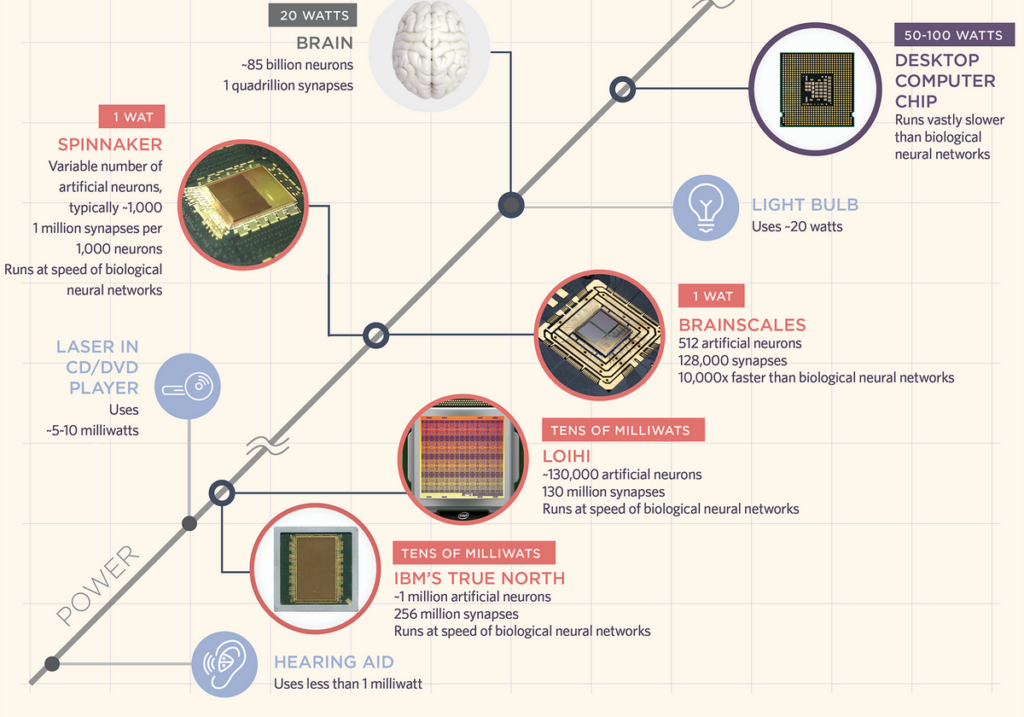

Future neural networks will likely be combined with other AI techniques in hybrid models and paired with explainable AI methods. Scientists are also exploring optical neural networks (ONNs), which use light to perform computations, as an approach that might overcome current limits on computing power and energy use.

Smaller, more efficient models are emerging alongside large-scale systems. OpenAI and Meta are developing models that perform similar tasks with fewer resources. Kolmogorov-Arnold Networks (KANs) show promise for making networks more interpretable and potentially more efficient as they scale.

Conclusion

Neural networks have grown from a basic conceptual model in 1943 into advanced systems that power many applications we use every day. This piece has shown how these systems, loosely modeled on the brain’s structure, handle complex tasks that once required human expertise.

These networks quietly operate behind the technology we use daily – from Face ID that unlocks our phones to Netflix that suggests our next favorite show. They differ from traditional computing because they learn and adapt instead of following strict programming rules.

CNNs for image recognition and RNNs for language processing show how versatile these networks are across different applications. Despite their power, these systems have real limitations, particularly in generalization and transparency. Research continues to pursue more efficient and interpretable models through directions like optical neural networks and hybrid approaches.

Neural networks will shape technology’s future significantly. Their progress might solve current challenges and create new possibilities in healthcare and environmental protection. Knowledge of these AI building blocks prepares us for a future where humans and AI work together more often.